What Is The Unit Of Electricity?

Electricity is all around us. We rely on it to power our homes, businesses, and society. But what exactly is electricity? In simple terms, electricity is the set of phenomena associated with electric charge. Most practical uses involve the movement of electrons through a conductor, which transfers energy. This energy allows us to light up rooms, power appliances, run factories, and much more. In order to harness the power of electricity, we need a way to measure it. This brings us to the question: What is the standard unit for measuring electricity?

To understand the unit of electricity, we must first understand the components that make up an electrical system. Electricity is generated and transmitted in the form of electric current and voltage. The flow of electrons through a conductor creates electric current. Voltage is the force that pushes electrons through the circuit, causing them to do work. When current flows against resistance, it results in power being consumed. Therefore, the basic units we need for measuring electricity are amps (current), volts (voltage), ohms (resistance), and watts (power). Over the years, scientists have defined standard units for each of these electrical quantities to enable precise measurement and universal understanding.

In this article, we will explore the history of how these important units were established and why the standards were needed to advance electrical technology. We will also look at the modern International System of Units (SI) used globally today. Understanding electricity requires grasping these fundamental units of measurement.

What is Electric Charge?

Electric charge is a fundamental property of matter that causes particles to interact and produce electrical forces between them. Atoms consist of negatively charged electrons orbiting around a positively charged nucleus. The number of protons in the nucleus determines its positive charge, while the number of electrons orbiting the nucleus determines the negative charge. Atoms are electrically neutral when they contain equal numbers of protons and electrons.

When atoms gain or lose electrons, they become electrically charged particles called ions. Positively charged ions have more protons than electrons, while negatively charged ions have more electrons than protons. Electric charge is measured in coulombs, represented by the symbol C. One coulomb is the charge carried by about 6.24 × 10^18 protons; the same number of electrons carries a charge of minus one coulomb.

Electric charge is directly responsible for producing electricity. When electric charges move, they create electric currents. The interactions and motions of electric charges are the basis for all electrical phenomena, from lightning to nerve impulses. Understanding electric charge is key to understanding electricity.

Early History

The study of electricity dates back to ancient times when philosophers such as Thales of Miletus observed static electricity from rubbing amber. However, the modern understanding of electricity began in the 18th century with Benjamin Franklin’s famous kite experiment in 1752. Franklin demonstrated that lightning was a form of electricity by flying a kite with a key attached during a thunderstorm. The key accumulated an electric charge from the atmosphere, proving that lightning was an electrical phenomenon.

A couple of decades earlier, in the 1730s, Charles Du Fay had investigated static electricity and proposed that it comes in two forms, which he called vitreous and resinous. These were later identified with positive and negative charge respectively, terms introduced by Franklin. Building on this work, physicists developed early electrostatic units to measure electric charge before the ampere was established.

Ampere and Current

Electric current is the flow of electric charge. It is measured in amperes, named after French physicist André-Marie Ampère. He was one of the founders of the science of classical electromagnetism.

The ampere represents electric current and is defined based on the elementary charge (the charge of a proton). An ampere is the flow of electric charge across a surface at the rate of one coulomb (about 6.24 × 10^18 protons’ worth of charge) per second. Electric current is important in electrical engineering, electromagnetism and electronics.

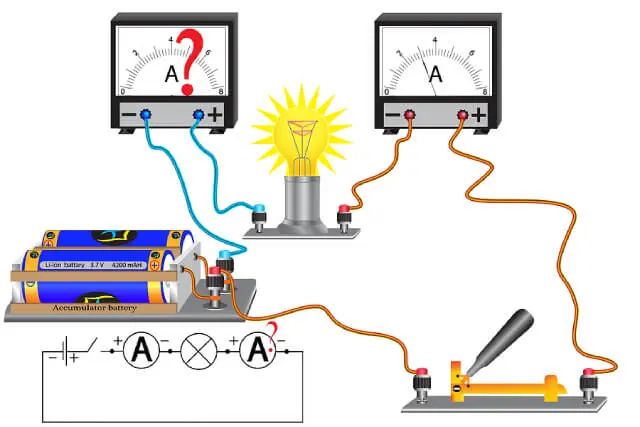

When current flows through a wire or circuit, electrons are moving from atom to atom. The flow of these electrons makes up the electric current. The higher the current, the more electrons are moving through the conductors per second. Current is measured using an ammeter and represented by the variable I.
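The definition of the ampere as one coulomb per second can be checked with a few lines of arithmetic. The following is a minimal sketch; the function names are illustrative and not part of any standard library, and the constant is the SI value of the elementary charge.

```python
# Elementary charge in coulombs (exact by definition in the SI since 2019).
ELEMENTARY_CHARGE_C = 1.602176634e-19


def current_amperes(charge_coulombs: float, seconds: float) -> float:
    """Average current: charge passing a point divided by the elapsed time."""
    if seconds <= 0:
        raise ValueError("seconds must be positive")
    return charge_coulombs / seconds


def charges_per_second(current_a: float) -> float:
    """Number of elementary charges passing a point each second."""
    return current_a / ELEMENTARY_CHARGE_C


# 3 coulombs passing in 2 seconds is an average current of 1.5 amperes.
print(current_amperes(3.0, 2.0))

# One ampere corresponds to roughly 6.24 × 10^18 elementary charges per second.
print(f"{charges_per_second(1.0):.3e}")
```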

Voltage

Voltage, also called electric potential difference, is the difference in electric potential energy per unit charge between two points.

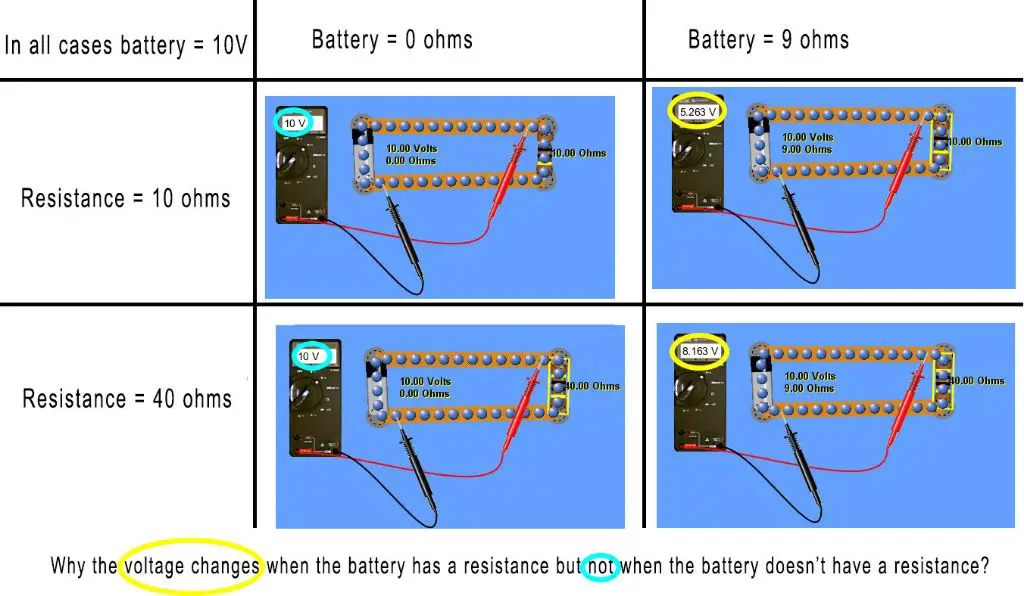

In electric circuits, voltage is the “push” or “pressure” that causes current to flow. For example, a 12-volt battery applies a 12-volt potential difference across the terminals, causing current to flow if a closed circuit is connected.

The standard unit for voltage is the volt (symbol V). One volt is one joule of energy per coulomb of charge. Equivalently, it is the voltage required to drive a current of one ampere through a resistance of one ohm. Voltage can therefore be thought of as the “force” pushing electrons through the circuit, although strictly it is energy per unit charge rather than a force.
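Because voltage is energy per unit charge, the energy delivered to a given amount of charge is simply charge multiplied by voltage. The sketch below illustrates this with the 12-volt battery mentioned above; the function name and the 2-coulomb figure are assumptions chosen for illustration.

```python
def energy_joules(charge_coulombs: float, voltage_volts: float) -> float:
    """Energy transferred when a charge moves through a potential difference."""
    return charge_coulombs * voltage_volts


# Moving 2 coulombs of charge across a 12-volt battery transfers 24 joules.
print(energy_joules(2.0, 12.0))
```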

Voltage is an important parameter in electrical engineering. The operating voltage helps determine the insulation and ratings of wiring, circuit breakers, power supplies and other components needed for safe operation. The higher the voltage, the more dangerous the electricity can be.

Common household voltages are 120V in North America and 230V in Europe. High voltage transmission lines can operate at voltages in the hundreds of thousands of volts.

Voltmeters are used to measure voltage, while voltage sources like batteries and generators apply a voltage to a circuit.

Resistance

Electrical resistance measures how difficult it is for electric current to flow through a material. Materials like copper have low resistance and allow electricity to flow easily. Materials like rubber have high resistance and restrict the flow of electricity. Resistance is what enables materials to act as insulators against electricity.

Resistance is measured in units called ohms, represented by the Greek letter omega (Ω). An object with a resistance of 1 ohm will allow 1 ampere of current to flow through it when a potential difference of 1 volt is applied across it. The higher the resistance, the more the material impedes the flow of electricity.

Ohm’s Law is one of the most fundamental principles in electronics. It states that the current (I) flowing through a conductor is directly proportional to the voltage (V) applied across it and inversely proportional to its resistance (R). It is expressed mathematically as:

V = I × R

Here V is voltage in volts, I is current in amps, and R is resistance in ohms. For example, if a 5-volt power source is connected across a component with 2 ohms of resistance, the current is 2.5 amps (5V ÷ 2Ω = 2.5A). Ohm’s Law allows any one of voltage, current, and resistance to be calculated from the other two.
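Ohm’s Law can be rearranged to solve for whichever quantity is unknown. The following is a minimal sketch of the three forms; the function names are illustrative, and the example reuses the 5-volt, 2-ohm figures from the text.

```python
def voltage(current_a: float, resistance_ohm: float) -> float:
    """V = I × R"""
    return current_a * resistance_ohm


def current(voltage_v: float, resistance_ohm: float) -> float:
    """I = V ÷ R"""
    if resistance_ohm == 0:
        raise ValueError("resistance must be non-zero")
    return voltage_v / resistance_ohm


def resistance(voltage_v: float, current_a: float) -> float:
    """R = V ÷ I"""
    if current_a == 0:
        raise ValueError("current must be non-zero")
    return voltage_v / current_a


# A 5-volt source across a 2-ohm component drives 2.5 amps.
print(current(5.0, 2.0))
```

Each function is just the same relationship solved for a different variable, so feeding the result of one into another returns the starting values.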

Power

Power measures the rate of energy transfer or the rate at which work is done. In a circuit, it describes how quickly electrical energy is delivered, which depends on both the current and the voltage. The standard unit of power is the watt, which represents one joule of energy transferred per second. For example, if a light bulb converts 100 joules of electrical energy into light and heat over the course of 5 seconds, it has a power rating of 20 watts (100 joules / 5 seconds = 20 watts).

The power dissipated or consumed by an electrical device depends on its resistance and the amount of current flowing through it, which is described mathematically by Joule’s law: P = I^2 × R, where P is power in watts, I is current in amps, and R is resistance in ohms. This equation shows that power increases quadratically as current increases in a fixed resistance, so doubling the current quadruples the power. Power also goes up linearly if resistance increases while current remains constant.
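Both ways of computing power described above, energy divided by time and Joule’s law, are easy to verify numerically. This is a minimal sketch with illustrative function names; the 2-amp and 10-ohm values are assumptions chosen to show the quadratic effect.

```python
def power_from_energy(energy_joules: float, seconds: float) -> float:
    """P = E ÷ t, in watts."""
    if seconds <= 0:
        raise ValueError("seconds must be positive")
    return energy_joules / seconds


def power_from_current(current_a: float, resistance_ohm: float) -> float:
    """Joule's law: P = I^2 × R, in watts."""
    return current_a ** 2 * resistance_ohm


# The light bulb example: 100 joules over 5 seconds is 20 watts.
print(power_from_energy(100.0, 5.0))

# Doubling the current through a fixed 10-ohm resistance quadruples the power.
print(power_from_current(2.0, 10.0))  # 40 watts
print(power_from_current(4.0, 10.0))  # 160 watts
```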

Power measures how quickly electrical energy is transferred by a circuit or device. A high-wattage device like a refrigerator uses electricity faster than a low-wattage device like an LED light. Power companies measure customers’ electricity usage in kilowatt-hours (kWh), a unit of energy: one kilowatt-hour is the energy transferred by one kilowatt of power sustained for one hour, or 3.6 million joules. Understanding power and how it relates to current, voltage, and resistance is key for electrical engineers when designing circuits and systems.
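The link between a device's wattage and the kilowatt-hours on a bill is a multiplication of power by time. The sketch below assumes a hypothetical 100-watt device running for 10 hours; the function names are illustrative.

```python
JOULES_PER_KWH = 3_600_000.0  # 1000 watts × 3600 seconds


def energy_kwh(power_watts: float, hours: float) -> float:
    """Energy used, in kilowatt-hours, by a device running at constant power."""
    return power_watts * hours / 1000.0


def kwh_to_joules(kwh: float) -> float:
    """Convert kilowatt-hours to joules."""
    return kwh * JOULES_PER_KWH


# A 100-watt device running for 10 hours uses 1 kWh, or 3.6 million joules.
used = energy_kwh(100.0, 10.0)
print(used)
print(kwh_to_joules(used))
```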

Other Units

While volts, amps, and ohms are the most common units used to describe electricity, there are several other important units to be aware of:

Joule – The joule is the SI unit of energy, defined as the work done by a force of one newton acting through a distance of one metre. One joule is equal to the energy consumed by one watt of power over the course of one second. Joules are commonly used to measure electrical energy.

Coulomb – The coulomb is the SI unit of electric charge. One coulomb is defined as the amount of charge transported by a steady current of one ampere flowing for one second. Coulombs are used to measure the total quantity of electric charge.

Farad – The farad is the SI unit of capacitance. One farad is defined as the capacitance of a capacitor which stores one coulomb of charge when subjected to a potential difference of one volt. Farads measure the capacity of a capacitor to store electric charge per volt applied.

Weber – The weber is the SI unit of magnetic flux. One weber is defined as the magnetic flux that induces an electromotive force of one volt when it is reduced uniformly to zero within one second in a single-turn coil. Webers quantify the total magnetic flux passing through a surface; the strength of the field itself (magnetic flux density) is measured in teslas, or webers per square metre.

While volts, amps and ohms may be the most common electrical units encountered, joules, coulombs, farads and webers play key roles as well in electrical engineering and physics.
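The coulomb and farad definitions above translate directly into two small formulas: charge equals current times time, and stored charge equals capacitance times voltage. The following is a minimal sketch; the function names and the 100-microfarad, 5-volt example are assumptions for illustration.

```python
def charge_from_current(current_a: float, seconds: float) -> float:
    """Q = I × t: charge in coulombs moved by a steady current."""
    return current_a * seconds


def capacitor_charge(capacitance_f: float, voltage_v: float) -> float:
    """Q = C × V: charge in coulombs stored on a capacitor."""
    return capacitance_f * voltage_v


# One ampere flowing for one second transports one coulomb.
print(charge_from_current(1.0, 1.0))

# A one-farad capacitor at one volt stores one coulomb.
print(capacitor_charge(1.0, 1.0))

# A 100-microfarad capacitor charged to 5 volts stores about 0.0005 coulombs.
print(capacitor_charge(100e-6, 5.0))
```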

SI Units

The SI system stands for the International System of Units and is the modern form of the metric system. It provides a unified and coherent system of measurements that is accepted and used around the world in science, technology, and trade.

The SI is based on seven base units that define the basic measurements of the physical world. The base units are:

- Metre (m) for length

- Kilogram (kg) for mass

- Second (s) for time

- Ampere (A) for electric current

- Kelvin (K) for temperature

- Mole (mol) for amount of substance

- Candela (cd) for luminous intensity

Derived units are formed by combining these base units according to algebraic relations linking the corresponding quantities. This allows the creation of coherent derived units for other quantities like voltage, force, power, etc. Using the SI system unites scientific measurement across disciplines and ensures standardization and precision.
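One way to see how derived units are built from base units is to track the exponent of each base unit through the defining relations. The sketch below is an illustration only, not a units library: each unit is represented as a dictionary mapping a base-unit symbol to its exponent, and the helper names are assumptions.

```python
def multiply(a: dict, b: dict) -> dict:
    """Multiply two units by adding the exponents of their base units."""
    result = dict(a)
    for unit, exponent in b.items():
        result[unit] = result.get(unit, 0) + exponent
    # Drop base units whose exponents cancel to zero.
    return {u: e for u, e in result.items() if e != 0}


def divide(a: dict, b: dict) -> dict:
    """Divide two units by subtracting the exponents of the divisor."""
    return multiply(a, {u: -e for u, e in b.items()})


METRE, KILOGRAM, SECOND, AMPERE = {"m": 1}, {"kg": 1}, {"s": 1}, {"A": 1}

NEWTON = divide(multiply(KILOGRAM, METRE), multiply(SECOND, SECOND))
JOULE = multiply(NEWTON, METRE)     # newton times metre
WATT = divide(JOULE, SECOND)        # joule per second
VOLT = divide(WATT, AMPERE)         # watt per ampere
OHM = divide(VOLT, AMPERE)          # volt per ampere
COULOMB = multiply(AMPERE, SECOND)  # ampere times second

print("volt:", VOLT)
print("ohm:", OHM)
print("coulomb:", COULOMB)
```

Read this way, the volt comes out as kg·m^2·s^-3·A^-1 and the ohm as kg·m^2·s^-3·A^-2, so every electrical unit discussed here reduces to the metre, kilogram, second, and ampere.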

Conclusion

In summary, there is no single unit of electricity; each electrical quantity has its own standard unit. The volt, named after Alessandro Volta, measures the electric potential difference between two points in a circuit. The ampere, which measures electric current, is the SI base unit from which the other electrical units are derived. Other important units include the ohm for resistance and the watt for power.

Having standardized units for electricity has been crucial for electrical engineering and technology. It allows devices and systems to be designed to certain specifications and performance levels. The volt, ampere, ohm, and watt provide a common language that enables components to work together effectively. As our use of electricity has increased dramatically over the past century, these standardized units have supported innovation and enabled our modern electrical world.