What Is Used To Measure Electricity?

Electricity is an essential part of modern life that powers our homes, businesses, and technologies. To utilize electricity effectively, we need ways to measure and quantify it. Understanding key electrical parameters allows us to design, troubleshoot, and optimize electrical systems and devices. This article provides an overview of the basic quantities used to measure electricity and the tools used to measure them.

Voltage

Voltage is the difference in electric potential between two points in a circuit, that is, the energy available per unit of electric charge. It is often described as the “push” or “pressure” that causes current to flow. Voltage is measured in volts and is represented by the letter V.

One volt corresponds to one joule of energy per coulomb of charge. A higher voltage means each unit of charge can deliver more energy as it moves through the circuit.

Voltage can be measured using a voltmeter, which is connected in parallel across a component or section of a circuit to determine the difference in electric potential between two points. Voltmeters have different measurement ranges to handle the wide span of voltages in electrical systems, from millivolts in small circuits to hundreds of thousands of volts in power transmission lines.

Some common voltage levels and their uses:

- 1.5V – AA and AAA alkaline batteries

- 3.7V – Nominal voltage of lithium-ion cells in consumer electronics

- 5V – USB power adapters, Arduino boards

- 12V – Car electrical systems

- 120V – Household outlets in North America

- 230V – Household outlets in Europe

Current

Current is the flow of electric charge in an electrical circuit. It is measured in amperes (A), after the French scientist André-Marie Ampère who studied electromagnetism in the early 19th century. The ampere represents a flow of one coulomb (a unit of electric charge) per second past a point in a circuit.

Current can be direct current (DC), which flows in one direction, as from a battery, or alternating current (AC), which reverses direction periodically, as in the mains supply from a power plant. Devices called ammeters are used to measure current, and they are connected in series so that the current being measured flows through them. A traditional moving-coil ammeter passes the current, or a known fraction of it, through a coil suspended in a magnetic field. The greater the current, the stronger the force on the coil and the further it rotates. This rotation is calibrated to indicate the amperage.

Current is related to voltage and resistance by Ohm’s Law, which states that the current through a conductor between two points is directly proportional to the voltage across the two points, and inversely proportional to the resistance between them. So for a given voltage, increasing the resistance reduces the current, while decreasing the resistance increases the current.

Resistance

Resistance is the measure of opposition to the flow of electric current in a circuit. It is represented by the letter R and measured in units called ohms, symbolized by the Greek letter omega (Ω). The higher the resistance value, the more the resistor opposes the flow of current.

The resistance of a conductor depends on four main factors:

- Material – Metals like copper have low resistance while insulators like rubber have very high resistance.

- Length – Longer conductors have higher resistance than shorter ones.

- Cross-sectional area – Thicker wires have lower resistance than thinner wires.

- Temperature – Most metals increase in resistance as temperature rises, while semiconductors typically decrease in resistance.
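The four factors above can be combined in a short calculation. The sketch below assumes the standard relation R = ρL/A and a linear temperature approximation. The copper constants are approximate textbook values, and the function names are illustrative rather than taken from any library.

```python
# Approximate resistivity of copper at 20 °C, in ohm-metres (assumed value).
COPPER_RESISTIVITY = 1.68e-8
# Approximate temperature coefficient of copper, per °C (assumed value).
COPPER_TEMP_COEFF = 0.0039


def wire_resistance(resistivity, length_m, area_m2):
    """Return resistance in ohms using R = rho * L / A."""
    return resistivity * length_m / area_m2


def resistance_at_temperature(r_at_20, temp_c, temp_coeff):
    """Linear approximation: R = R20 * (1 + alpha * (T - 20))."""
    return r_at_20 * (1 + temp_coeff * (temp_c - 20.0))


# 10 m of copper wire with a 1 mm^2 cross-section (1e-6 m^2).
r_cold = wire_resistance(COPPER_RESISTIVITY, 10.0, 1e-6)
r_hot = resistance_at_temperature(r_cold, 70.0, COPPER_TEMP_COEFF)
print(f"{r_cold:.3f} ohm at 20 C, {r_hot:.3f} ohm at 70 C")
```

Doubling the length doubles the result, and doubling the cross-sectional area halves it, which matches the list above.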

Ohm’s law describes the relationship between voltage, current, and resistance in a circuit:

Voltage (V) = Current (I) x Resistance (R)

So if any two of voltage, current, and resistance are known, the third can be calculated. This relationship is crucial in analyzing and designing electrical circuits.
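As a minimal sketch of this relationship, the helper below returns whichever quantity is missing. The function name `ohms_law` and its keyword arguments are illustrative choices, and the example values are hypothetical.

```python
def ohms_law(voltage=None, current=None, resistance=None):
    """Given exactly two of V, I, and R, return the missing one (V = I * R)."""
    known = [x is not None for x in (voltage, current, resistance)]
    if sum(known) != 2:
        raise ValueError("provide exactly two of voltage, current, resistance")
    if voltage is None:
        return current * resistance   # V = I * R
    if current is None:
        return voltage / resistance   # I = V / R
    return voltage / current          # R = V / I


print(ohms_law(current=0.5, resistance=24.0))   # voltage in volts: 12.0
print(ohms_law(voltage=12.0, resistance=24.0))  # current in amperes: 0.5
print(ohms_law(voltage=12.0, current=0.5))      # resistance in ohms: 24.0
```

Note that a resistance or current of zero would raise a `ZeroDivisionError` here; a fuller version would need to handle those cases explicitly.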

Power

Power is the rate at which electrical energy is transferred by an electrical circuit. It is measured in watts. Power has an important relationship with voltage and current:

P = V x I

Where P is power (in watts), V is voltage (in volts), and I is current (in amps). This equation shows that power depends on both voltage and current: if you increase either one while the other stays the same, power increases in proportion. Power is an instantaneous measurement, describing how much energy is being transferred at any given moment in an electrical circuit.

For example, a 60-watt lightbulb uses 60 watts of electrical power to produce light and heat. Appliances like televisions and refrigerators list their power consumption in watts on their nameplates. Understanding power usage is important for managing electrical loads and ensuring circuits are not overloaded.
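To illustrate how P = V x I helps with managing loads, the sketch below adds up the power of several appliances and checks the resulting current against a breaker rating. The appliance wattages and the 15 A rating are hypothetical values chosen for the example.

```python
def power_watts(voltage, current):
    """P = V * I."""
    return voltage * current


def current_amps(power, voltage):
    """Rearranged form: I = P / V."""
    return power / voltage


# Hypothetical loads sharing one 120 V household circuit, in watts.
loads = {"light bulb": 60, "television": 150, "space heater": 1500}
BREAKER_AMPS = 15  # assumed breaker rating for this example

total_power = sum(loads.values())
total_current = current_amps(total_power, 120)
print(f"{total_power} W draws {total_current:.2f} A")
print("overloaded" if total_current > BREAKER_AMPS else "within rating")
```

With these numbers the circuit carries 1710 W, or 14.25 A, which is just under the assumed rating.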

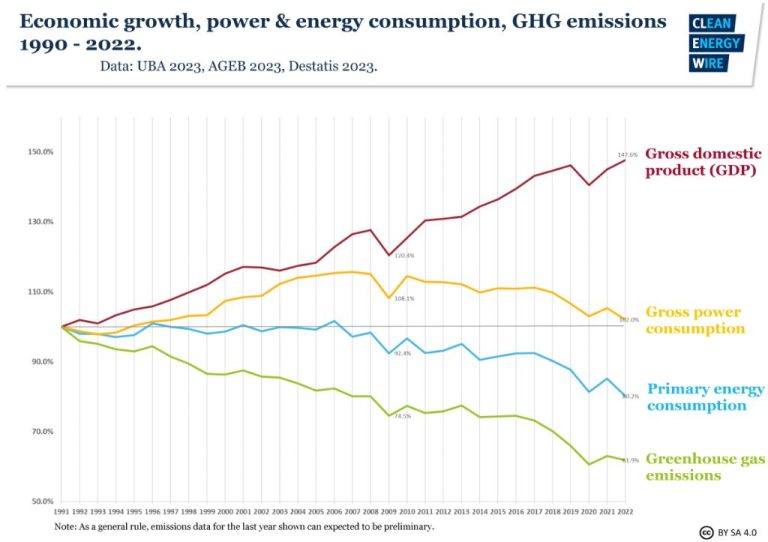

Energy

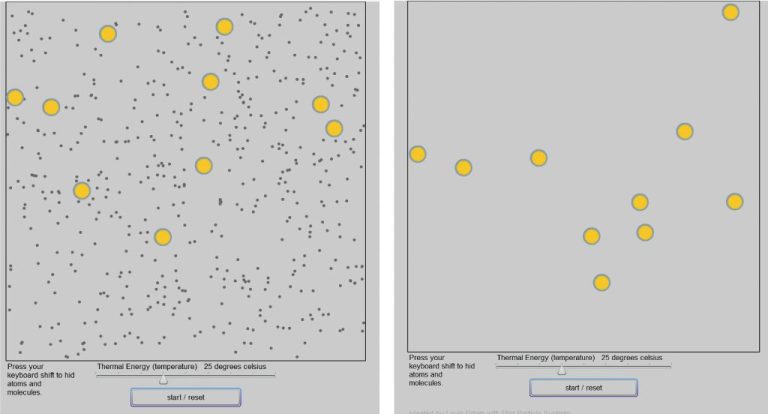

Energy is the capacity to do work or produce heat. It is measured in joules (J), where one joule is one watt of power sustained for one second. For example, a 100-watt light bulb uses 100 joules of energy every second. The key thing to understand about energy is its relationship to power and time.

Power is the rate at which energy is transferred or converted, and time is the duration over which that power is applied. If a 100-watt bulb is left on for 10 seconds, it uses 100 watts x 10 seconds = 1000 joules of energy. More generally, energy is power integrated, or summed up, over time.

In electrical terms, energy is the total work done by electric charge carriers. For electricity billing it is usually expressed in watt-hours (Wh) or kilowatt-hours (kWh), which is power in watts multiplied by time in hours. One watt-hour equals 3600 joules. For example, a 60-watt light bulb powered for 5 hours uses 60 watts x 5 hours = 300 Wh of electrical energy.

So in summary, energy is the total amount of work, heat, or electricity delivered over time. Its measurement in joules or watt-hours involves the factors of power and time.
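The two worked examples above can be reproduced with a few lines of arithmetic. This is a small sketch with illustrative function names; the only constant is the definition that one watt-hour is 3600 joules.

```python
JOULES_PER_WATT_HOUR = 3600  # 1 W sustained for 3600 s


def energy_joules(power_w, seconds):
    """Energy in joules for constant power over a time in seconds."""
    return power_w * seconds


def energy_watt_hours(power_w, hours):
    """Energy in watt-hours for constant power over a time in hours."""
    return power_w * hours


def watt_hours_to_joules(watt_hours):
    """Convert watt-hours to joules."""
    return watt_hours * JOULES_PER_WATT_HOUR


print(energy_joules(100, 10))      # 1000 J
print(energy_watt_hours(60, 5))    # 300 Wh
print(watt_hours_to_joules(300))   # 1080000 J
```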

Meters

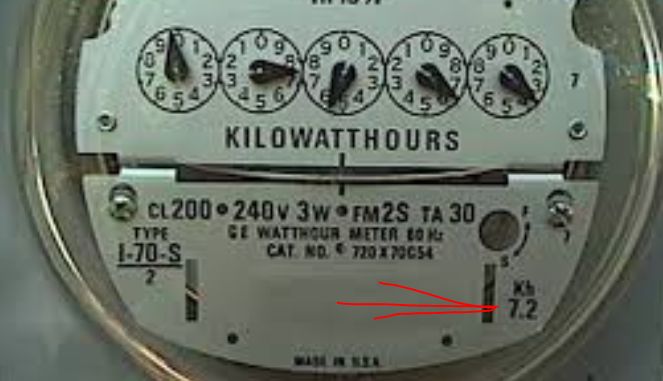

Meters are instruments used to measure and display electricity usage in homes and businesses. There are two main types of electric meters: analog and digital.

Analog meters are the traditional meters that have been used for decades. They have spinning metal disks and clock-like hands that spin to display the amount of electricity being used. The speed of the spinning disk indicates how quickly energy is being consumed. Analog meters must be read manually, usually by a utility worker checking the meter each month.

Digital meters are the newer kind of electric meter. Rather than having moving parts, they use sensors and computer chips to digitally record electricity usage data. Those with a communication link, commonly called smart meters, can transmit this data to the utility company, which allows remote reading and eliminates the need for manual meter readings. Many also allow customers to monitor their own energy usage through an online portal or app.

Both analog and digital electric meters rely on the same underlying principle: they sense the voltage and current of the supply and accumulate their product, power, over time. In an analog meter, coils driven by the voltage and current turn the disk at a rate proportional to power, and gears advance the dials. In a digital meter, sensors produce electronic signals proportional to voltage and current, which a chip multiplies and totals for the readout. Either way, the meter keeps a running tally of energy use over time for billing purposes.

Measurements

There are various tools and instruments used to measure electricity and its properties. Here are some of the main ones:

Measuring Voltage

Voltmeters are used to measure voltage, which is the electric potential difference between two points in a circuit. Analog voltmeters contain a small coil that deflects a needle across a scale when a small current, proportional to the applied voltage, flows through it. Digital voltmeters use an analog-to-digital converter to provide a numerical voltage reading.
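The conversion step inside a digital voltmeter can be sketched as follows. This assumes an idealized converter whose maximum count corresponds to the reference voltage, plus a resistive divider that scales the input down to the converter's range. The bit depth, reference voltage, and resistor values are hypothetical.

```python
def adc_counts_to_volts(counts, reference_volts, bits):
    """Convert a raw ADC count to volts, assuming full scale = reference."""
    full_scale = (1 << bits) - 1  # largest count the converter can report
    if not 0 <= counts <= full_scale:
        raise ValueError("count outside the converter's range")
    return counts * reference_volts / full_scale


def undo_divider(adc_volts, r_top, r_bottom):
    """Recover the input voltage seen before a two-resistor divider."""
    return adc_volts * (r_top + r_bottom) / r_bottom


# Hypothetical 10-bit converter with a 5 V reference, reading count 512.
v_at_adc = adc_counts_to_volts(512, 5.0, 10)
# Hypothetical 30 kΩ / 10 kΩ divider, which scales the input by 1/4.
v_input = undo_divider(v_at_adc, 30_000, 10_000)
print(f"{v_at_adc:.3f} V at the converter, {v_input:.3f} V at the probes")
```

Some converters define full scale as 2^bits rather than 2^bits - 1, so the exact scaling depends on the part in use.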

Measuring Current

Ammeters measure the flow of electric current in a circuit and are connected in series with it. Traditional analog ammeters work similarly to voltmeters, using a coil and needle deflection. Modern digital ammeters typically pass the current through a low-value shunt resistor, measure the resulting voltage drop, and display the reading digitally.
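One common way a digital ammeter converts current to a voltage is with a low-value shunt resistor, applying Ohm's law to the voltage drop across it. The sketch below assumes that approach; the shunt value and readings are hypothetical.

```python
def current_from_shunt(shunt_volts, shunt_ohms):
    """Infer current from the voltage drop across a known shunt: I = V / R."""
    return shunt_volts / shunt_ohms


def shunt_power_loss(current, shunt_ohms):
    """Power dissipated in the shunt itself: P = I^2 * R."""
    return current ** 2 * shunt_ohms


# Hypothetical reading: 50 mV measured across a 10 milliohm shunt.
amps = current_from_shunt(0.050, 0.010)
loss = shunt_power_loss(amps, 0.010)
print(f"{amps:.2f} A through the shunt, {loss:.3f} W lost as heat")
```

Keeping the shunt resistance small limits both the heat it dissipates and the disturbance to the circuit being measured.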

Measuring Resistance

The resistance in a circuit can be measured using an ohmmeter. Simple ohmmeters apply a small voltage and measure how much current flows through the resistance to calculate its value. More advanced devices use a 4-wire connection, which senses the voltage through a separate pair of leads so that the resistance of the test leads does not affect the reading.
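The benefit of a 4-wire connection shows up most clearly with small resistances. The following is a simplified model, assuming each test lead adds a fixed series resistance and that the sense leads carry negligible current. All values are hypothetical.

```python
def two_wire_reading(r_unknown, r_lead):
    """Two-wire: both test leads sit in series with the unknown resistance."""
    return r_unknown + 2 * r_lead


def four_wire_reading(test_current, sense_volts):
    """Four-wire: the voltage is sensed directly across the unknown, so R = V / I."""
    return sense_volts / test_current


r_unknown = 0.5      # ohms, the value we want to measure
r_lead = 0.2         # ohms per test lead (assumed)
test_current = 0.1   # amperes forced through the unknown (assumed)

# The sense leads see only the drop across the unknown resistance.
sense_volts = test_current * r_unknown

print(f"two-wire:  {two_wire_reading(r_unknown, r_lead):.2f} ohm")
print(f"four-wire: {four_wire_reading(test_current, sense_volts):.2f} ohm")
```

In this model the two-wire reading is off by the full resistance of both leads, while the four-wire reading recovers the true value.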

Measuring Power

Wattmeters are used to measure electrical power consumption. Electrodynamic wattmeters contain coils that interact to produce a reading proportional to voltage and current. Digital power meters sample the voltage and current many times per second to calculate power.
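The sampling approach used by digital power meters can be sketched as averaging the product of simultaneous voltage and current samples. The example below generates one cycle of synthetic sine-wave samples. The amplitudes and phase shift are hypothetical, and a real meter would read its samples from hardware instead.

```python
import math


def average_power(voltage_samples, current_samples):
    """Mean of the instantaneous products v * i over the sampled window."""
    if len(voltage_samples) != len(current_samples) or not voltage_samples:
        raise ValueError("need equal, non-empty sample lists")
    products = [v * i for v, i in zip(voltage_samples, current_samples)]
    return sum(products) / len(products)


def sine_samples(amplitude, phase_rad, count):
    """Evenly spaced samples covering exactly one cycle of a sine wave."""
    return [amplitude * math.sin(2 * math.pi * k / count + phase_rad)
            for k in range(count)]


n = 1000
volts = sine_samples(170.0, 0.0, n)               # about 120 V RMS
amps_in_phase = sine_samples(2.0, 0.0, n)         # current in step with voltage
amps_lagging = sine_samples(2.0, -math.pi / 3, n) # current lagging by 60 degrees

p_in_phase = average_power(volts, amps_in_phase)
p_lagging = average_power(volts, amps_lagging)
print(f"in phase: {p_in_phase:.1f} W, lagging: {p_lagging:.1f} W")
```

When current lags the voltage, the average power drops even though the amplitudes are unchanged, which is why a power meter multiplies the samples rather than the separately measured magnitudes.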

Measuring Energy

Electricity meters track energy usage over time. Traditional electro-mechanical meters use rotary discs that turn at speeds proportional to power. Smart meters record usage digitally and transmit readings to the utility company.
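The running tally kept by an energy meter amounts to summing power over time. The sketch below assumes power readings taken at a fixed interval and treats each reading as constant over its interval. The readings are hypothetical.

```python
SECONDS_PER_HOUR = 3600


def accumulate_energy_wh(power_readings_w, interval_seconds):
    """Sum power * time over fixed intervals and return watt-hours."""
    joules = sum(p * interval_seconds for p in power_readings_w)
    return joules / SECONDS_PER_HOUR


# Hypothetical power readings in watts, taken every 15 minutes (900 s).
readings = [400, 1200, 800, 400]
energy_wh = accumulate_energy_wh(readings, 900)
print(f"{energy_wh} Wh over one hour")  # 700.0 Wh
```

Shorter intervals track a varying load more closely, which is the same idea as the disc in an electro-mechanical meter turning faster or slower as power changes.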

Applications

Measuring electricity is critical in many applications and systems that use electrical energy. Here are some key examples:

- Homes and buildings: Electricity meters measure energy usage in homes, offices, factories, and commercial buildings. This allows utility companies to bill customers accurately for the electricity consumed. Smart meters provide real-time usage data to help manage demand.

- Industrial processes: Precise monitoring of electrical parameters enables optimization and control of motors, heaters, electrolysis, lighting, and other industrial systems. This improves efficiency and product quality.

- Power grids: Measurements of current, voltage, frequency, and power flow are essential for operating and protecting electrical grids. Grid monitoring allows rapid response to faults or instability.

- Electronics: Multimeters, oscilloscopes, and specialized instruments measure electricity in circuits and components. This assists in designing, testing, and troubleshooting electronic devices.

- Scientific research: Sensitive electrical sensors enable studying biological systems, material properties, weather phenomena, and particle physics. Precise measurements advance scientific understanding.

Overall, electricity measurement is integral to productivity, efficiency, safety, and innovation across the modern world.

Conclusion

In summary, electricity is measured using several important parameters including voltage, current, resistance, power, and energy. Instruments such as voltmeters, ammeters, ohmmeters, wattmeters, and electricity meters are used to quantify them. Knowing how to accurately measure electricity is crucial for operating electrical devices and systems safely and efficiently. The ability to quantify electrical parameters allows engineers to design, build, and troubleshoot electrical and electronic equipment. Precise electrical measurements also enable consumers and utility companies to monitor electricity usage.

Measuring electricity is essential for harnessing this invisible but powerful phenomenon. Quantifying voltage, current, resistance, power, and energy has enabled everything from long distance communication to consumer electronics to electric power generation and distribution. The measurement of electricity will continue to be important as societies depend increasingly on electrical and electronic technologies.