What Is The Unit Of A Watt?

A watt is an important and widely used unit for measuring power. Although a single watt is a small amount of power, the unit is crucial for quantifying energy flow in electrical systems, motors, and other applications. Understanding what a watt is and how to use it properly allows for precise calculations and conversions in a variety of fields.

With the rise of electronics and electrical devices over the past century, the watt has become an indispensable unit. Being able to conceptualize power usage and convert between watts and other units grants an intuitive grasp of energy flows. Engineers, electricians, physicists, and even regular consumers benefit from having a working knowledge of watts.

Definition of a Watt

A watt is the standard unit used to measure power, which is the rate at which energy is produced or consumed. Specifically, a watt is defined as one joule of energy per second. This means that power is a measurement of how quickly energy can be generated or used over time.

For example, a 100-watt light bulb uses 100 joules of electrical energy every second to produce light and heat. The higher the wattage of a bulb, appliance, or device, the more power it consumes.
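The relationship between watts, joules, and seconds in the light bulb example can be sketched in a few lines of Python. This is a minimal illustration that assumes constant power over the whole time span; the function names `energy_joules` and `average_power_watts` are hypothetical and chosen only for this example.

```python
def energy_joules(power_watts: float, seconds: float) -> float:
    """Energy in joules used at a constant power over a time span (E = P * t)."""
    return power_watts * seconds


def average_power_watts(energy_j: float, seconds: float) -> float:
    """Average power in watts for a given energy and duration (P = E / t)."""
    if seconds <= 0:
        raise ValueError("seconds must be positive")
    return energy_j / seconds


# A 100-watt bulb uses 100 joules every second...
print(energy_joules(100, 1))      # 100
# ...and 360,000 joules over one hour (3,600 seconds).
print(energy_joules(100, 3600))   # 360000
```

Dividing the hourly energy by the duration recovers the bulb's 100-watt rating, which is the definition of the watt read in the other direction.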

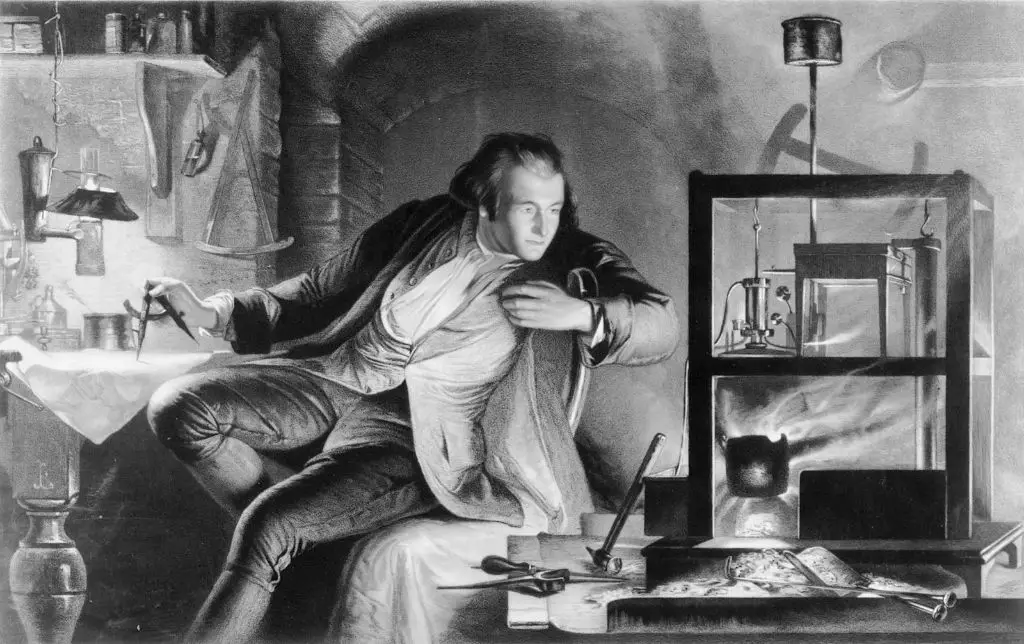

The watt is named after Scottish engineer James Watt, who helped pioneer the development of the steam engine in the late 18th century. His last name was given to the unit of power in his honor.

Overall, watts provide a standardized way to quantify power usage and generation. When energy is produced or used at the rate of one joule per second, that is equal to one watt of power.

History of the Watt

The watt unit was named after Scottish engineer James Watt, who helped develop an efficient steam engine in the late 18th century during the Industrial Revolution. Watt did not actually invent the watt, but his work on improving the Newcomen steam engine was fundamental to the development of power engineering.

In the early 1760s, Watt was hired to repair a model of the Newcomen engine, which was extremely inefficient in its use of energy. Watt analyzed the engine and experimented with improvements, adding a separate condenser that significantly increased efficiency. He patented his design in 1769.

Watt’s separate condenser was a major breakthrough in steam power, allowing engines to become tremendously more efficient than previous models. This paved the way for steam engines to be used for a wide range of industrial applications, from mills to locomotives.

In 1882, C. William Siemens proposed the watt as a unit of power in an address to the British Association for the Advancement of Science, in honor of the major contributions James Watt made to the development of steam power during the First Industrial Revolution. The watt was later adopted into the International System of Units (SI) in 1960.

Using Watts to Measure Power

Watts are commonly used to measure power in electrical and mechanical systems. Here are some examples:

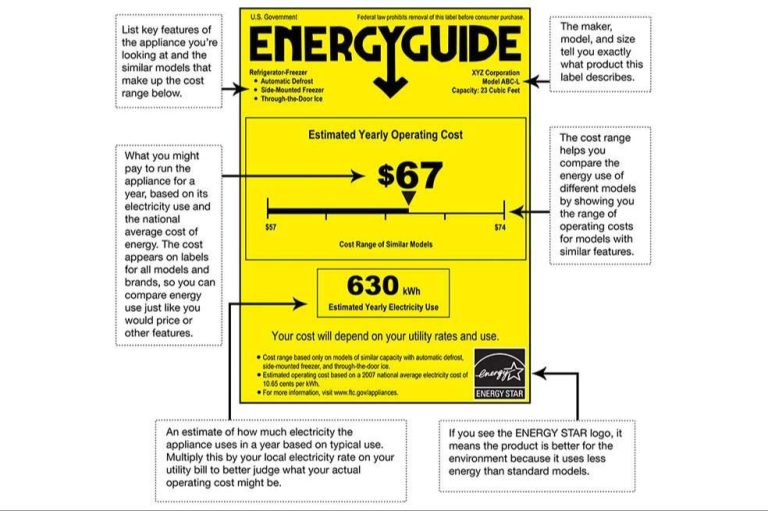

- The wattage rating on an electrical appliance indicates how much power it consumes. For example, a 60W light bulb draws 60 watts of electrical power.

- Electricity usage in homes and buildings is measured in kilowatt-hours (kWh). One kilowatt-hour is the energy used in one hour at a constant rate of 1,000 watts.

- The power output of vehicle engines is rated in horsepower, which can be converted to watts. A 100 horsepower car engine produces around 75,000 watts.

- Industrial motors, generators, and turbines have power ratings in megawatts (MW) or gigawatts (GW).

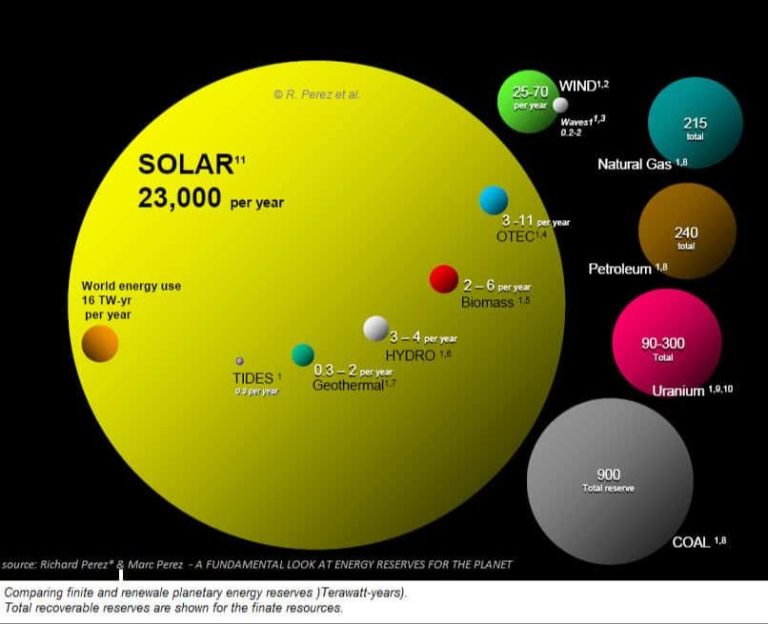

- In photovoltaic solar energy systems, the capacity to generate electricity is measured in watts. A 5 kilowatt solar array can produce 5,000 watts of power.

So in summary, watts are commonly used to rate, measure, and calculate power consumption and production in electrical and mechanical systems across many industries and applications. The watt provides a standard unit for comparing power usage and requirements.
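To make the link between a wattage rating and a kilowatt-hour bill concrete, here is a small Python sketch. It assumes the appliance draws its rated power the whole time it is on. The function names and the price of 0.15 per kWh are hypothetical values for illustration, not real tariff data.

```python
def energy_kwh(power_watts: float, hours: float) -> float:
    """Energy in kilowatt-hours used at a constant power for a number of hours."""
    return power_watts * hours / 1000.0


def energy_cost(power_watts: float, hours: float, price_per_kwh: float) -> float:
    """Cost of running a device, given a price per kilowatt-hour."""
    return energy_kwh(power_watts, hours) * price_per_kwh


# A 60 W bulb running 5 hours a day for 30 days.
hours_on = 5 * 30
ASSUMED_PRICE_PER_KWH = 0.15  # hypothetical tariff, in any currency

print(f"{energy_kwh(60, hours_on):.1f} kWh")  # 9.0 kWh
print(f"cost: {energy_cost(60, hours_on, ASSUMED_PRICE_PER_KWH):.2f}")
```

The same two functions work for any appliance, since only the wattage, the running time, and the tariff change.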

Watt Conversions

When measuring the power consumption of devices or electrical systems, it is common to use multiples and submultiples of the watt. Here are some common conversions:

Kilowatts (kW)

A kilowatt is equal to 1,000 watts. Kilowatts are commonly used when measuring the power consumption of larger appliances and electrical systems, like electric vehicle chargers, air conditioners, and home energy use.

Milliwatts (mW)

A milliwatt is equal to 1/1000 of a watt, or 0.001 watts. Milliwatts are commonly used to measure the power consumption of smaller electronics like smartphones, Bluetooth earbuds, IoT devices and more.

Megawatts (MW)

A megawatt is equal to 1,000,000 watts or 1,000 kilowatts. Megawatts are typically used to measure the generating capacity of power plants and large-scale electrical systems.

Gigawatts (GW)

A gigawatt is equal to 1,000,000,000 watts or 1,000 megawatts. Gigawatts are used to measure the capacity of very large power plants and national electrical grids.

Understanding these common watt conversions allows us to easily discuss and compare the power consumption across devices and systems of vastly different scales.
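The conversions listed above all reduce to multiplying or dividing by a power of ten. One way to sketch this in Python is a lookup table of watts per unit. The table name `WATTS_PER_UNIT` and the function `convert_power` are hypothetical helpers for this example.

```python
import math

# Number of watts in one of each unit, as listed in the text.
WATTS_PER_UNIT = {
    "mW": 1e-3,  # milliwatt
    "W": 1.0,    # watt
    "kW": 1e3,   # kilowatt
    "MW": 1e6,   # megawatt
    "GW": 1e9,   # gigawatt
}


def convert_power(value: float, from_unit: str, to_unit: str) -> float:
    """Convert a power value between units by going through watts."""
    return value * WATTS_PER_UNIT[from_unit] / WATTS_PER_UNIT[to_unit]


print(convert_power(5, "kW", "W"))    # 5000.0
print(convert_power(1, "GW", "MW"))   # 1000.0
# Small values can pick up floating-point rounding, so compare with a tolerance.
print(math.isclose(convert_power(250, "mW", "W"), 0.25))  # True
```

Routing every conversion through watts keeps the table to one entry per unit instead of one entry per pair of units.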

Watts vs. Other Energy Units

Watts provide a measurement of power, which is defined as the rate at which energy is transferred or work is done. There are several other common units that are used to quantify energy and power. Here is how watts compare:

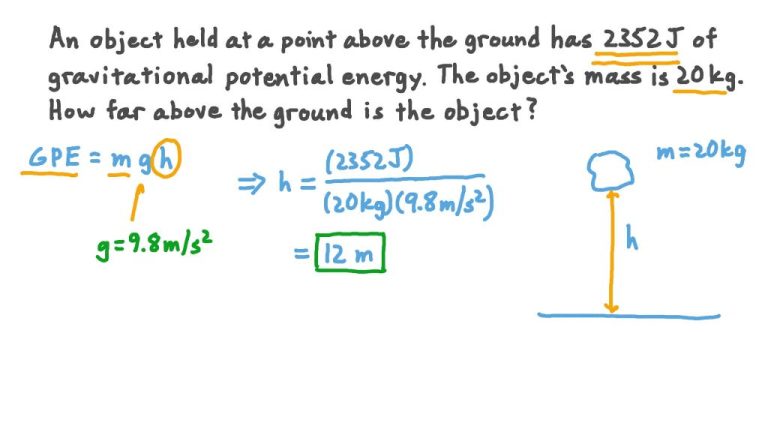

Joules are a unit of energy, while watts measure power. One watt is equal to one joule of energy transferred per second. For example, a 100-watt light bulb uses 100 joules of energy per second. Joules measure total work or energy, while watts measure the rate of energy usage.

Horsepower is another unit that measures power. One horsepower is equivalent to about 746 watts. So a motor rated at 5 horsepower outputs the same power as one rated at 3730 watts. Horsepower is often used to rate the power output of engines and motors.

Kilowatt-hours measure energy consumption over time. One kilowatt-hour is the amount of energy used by a 1,000 watt appliance in one hour. Utilities measure electricity usage in kilowatt-hours. One kilowatt-hour equals 3,600,000 joules of energy.

So in summary, watts measure instantaneous power, joules total work or energy, horsepower mechanical power, and kilowatt-hours energy usage over time. Being able to convert between these units helps compare power requirements for various applications.
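The conversion factors quoted in this section can be collected into a short Python sketch. It uses the approximate value of 746 watts per horsepower given in the text, and the function names are hypothetical.

```python
WATTS_PER_HP = 746.0            # approximate value used in the text
JOULES_PER_KWH = 3_600_000.0    # 1,000 W * 3,600 s


def hp_to_watts(horsepower: float) -> float:
    """Convert horsepower to watts (both are units of power)."""
    return horsepower * WATTS_PER_HP


def watts_to_hp(watts: float) -> float:
    """Convert watts to horsepower."""
    return watts / WATTS_PER_HP


def kwh_to_joules(kwh: float) -> float:
    """Convert kilowatt-hours to joules (both are units of energy)."""
    return kwh * JOULES_PER_KWH


def joules_to_kwh(joules: float) -> float:
    """Convert joules to kilowatt-hours."""
    return joules / JOULES_PER_KWH


print(hp_to_watts(5))      # 3730.0
print(kwh_to_joules(1))    # 3600000.0
```

Note that the functions only convert power to power and energy to energy. Moving between the two always requires a time span as well.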

Calculating Watts

There are a few key formulas for calculating watts in different scenarios:

Watts (W) = Volts (V) x Amps (A)

This is the basic power formula that shows watts are equal to volts multiplied by amps. For example, if a device uses 120 volts and 2 amps, the wattage would be:

Watts = 120V x 2A = 240W

Watts (W) = Volts (V) squared / Resistance (Ω)

For circuits with a known voltage and resistance, you can use this version of the power formula. For example, if a circuit has a voltage of 110V and a resistance of 50Ω, the wattage would be:

Watts = (110V)² / 50Ω = 242W

Watts (W) = Amps (A) squared x Resistance (Ω)

This version is used when the amps and resistance are known but voltage is unknown. For example, for a circuit with a current of 5A and a resistance of 25Ω, the wattage would be:

Watts = (5A)² x 25Ω = 625W

These formulas cover the main scenarios for calculating wattage. Applying the correct formula based on your known and unknown values is key to determining the wattage.
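The three formulas can be sketched as small Python functions that reproduce the worked examples above. This is an illustration for simple resistive circuits, and the function names are hypothetical.

```python
import math


def power_from_voltage_current(volts: float, amps: float) -> float:
    """P = V * I"""
    return volts * amps


def power_from_voltage_resistance(volts: float, ohms: float) -> float:
    """P = V^2 / R"""
    return volts ** 2 / ohms


def power_from_current_resistance(amps: float, ohms: float) -> float:
    """P = I^2 * R"""
    return amps ** 2 * ohms


print(power_from_voltage_current(120, 2))      # 240
print(power_from_voltage_resistance(110, 50))  # 242.0
print(power_from_current_resistance(5, 25))    # 625

# The formulas agree with each other: by Ohm's law, I = V / R,
# so 110 V across 50 ohms gives the same power either way.
current = 110 / 50
print(math.isclose(power_from_current_resistance(current, 50), 242.0))  # True
```

The last check shows that the three versions are one formula rewritten with Ohm's law, so the choice between them depends only on which two quantities are known.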

Watt Limitations

While watts are commonly used to measure power, they do have some limitations:

Instantaneous vs Continuous Power – Watts measure instantaneous power at a specific moment in time. But for some applications, the continuous power over time is more relevant. For example, a lightbulb’s power draw when first turned on briefly spikes above its continuous rate.

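AC vs DC Power – Watts apply to both AC (alternating current) and DC (direct current) circuits, but the calculation differs. In a DC circuit, power is simply volts multiplied by amps. In an AC circuit, voltage and current vary over time and may be out of phase, so real power is the product of the RMS voltage, the RMS current, and the power factor.

Reactivity – Watts measure only real power. They don’t account for reactive power in AC circuits with inductors or capacitors, which is measured in volt-amperes reactive (var). So power factor must be considered along with watts for the full picture.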

Context Dependence – Watts alone don’t provide enough context to fully understand power consumption. Factors like voltage, current, resistance, battery life, efficiency, and usage patterns also need to be considered.

While watts provide a standardized way to measure power, a more complete understanding requires looking at additional parameters and how power is consumed in real-world conditions.
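As a rough illustration of why power factor matters, the Python sketch below separates apparent, real, and reactive power. It assumes a steady sinusoidal AC circuit with RMS voltage and current, and the function names are hypothetical.

```python
import math


def apparent_power_va(v_rms: float, i_rms: float) -> float:
    """Apparent power in volt-amperes: S = V_rms * I_rms."""
    return v_rms * i_rms


def real_power_w(v_rms: float, i_rms: float, power_factor: float) -> float:
    """Real power in watts: P = V_rms * I_rms * power factor."""
    if not 0.0 <= power_factor <= 1.0:
        raise ValueError("power_factor must be between 0 and 1")
    return v_rms * i_rms * power_factor


def reactive_power_var(v_rms: float, i_rms: float, power_factor: float) -> float:
    """Reactive power magnitude in var: Q = S * sqrt(1 - pf^2)."""
    if not 0.0 <= power_factor <= 1.0:
        raise ValueError("power_factor must be between 0 and 1")
    return v_rms * i_rms * math.sqrt(1.0 - power_factor ** 2)


# A load drawing 5 A at 120 V with a power factor of 0.8.
print(apparent_power_va(120, 5))                 # 600 VA
print(round(real_power_w(120, 5, 0.8), 3))       # about 480 W
print(round(reactive_power_var(120, 5, 0.8), 3)) # about 360 var
```

With a power factor of 1.0, as in a purely resistive load or a DC circuit, real power equals volts times amps and the reactive part is zero.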

Practical Applications

Watts are commonly used to measure power in many real-world applications, especially in science and engineering fields. Here are some examples:

-

Electrical devices like lightbulbs, appliances, and electronics are rated in watts to indicate their power consumption. For example, a 60W incandescent light bulb requires 60 watts of power.

-

Photovoltaic solar panels are rated in wattage to specify their power output capacity. A 300W solar panel can produce up to 300 watts of electricity under optimal sunlight conditions.

-

Batteries and power sources are rated in watt-hours (Wh), which measures their total energy storage capacity. A 50Wh phone battery can deliver 50 watts of power for one hour when fully charged.

-

Electric motors and generators have a rated wattage to show their operating power level. A 1500W generator can produce up to 1500 watts of electrical power.

-

In physics, watts are used to calculate power in formulas like P=IV (power = current x voltage) and P=FdV/dt (power = force x velocity).

-

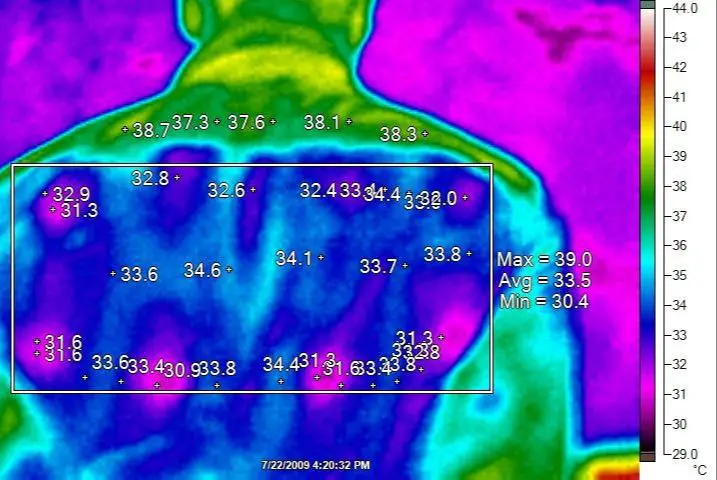

Sound intensity is measured in watts/cm2. Loudspeakers may be rated at 1 W/cm2 to indicate their acoustic power output.

-

Laser output power is quantified in watts. High-powered industrial lasers can have beam power levels exceeding 10,000 watts.

As these examples illustrate, the watt is a practical unit that allows scientists and engineers to precisely quantify power requirements, consumption, and output for a wide range of applications.
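The watt-hour rating described above also gives a quick way to estimate how long a battery lasts under a given load. The Python sketch below is an idealized estimate that assumes a constant load. The function name and the optional `efficiency` parameter are hypothetical additions for this example.

```python
def runtime_hours(capacity_wh: float, load_watts: float, efficiency: float = 1.0) -> float:
    """Estimated runtime in hours: usable energy (Wh) divided by load (W).

    efficiency is a hypothetical factor between 0 and 1 for conversion losses;
    the default of 1.0 models an ideal, lossless battery.
    """
    if load_watts <= 0:
        raise ValueError("load_watts must be positive")
    if not 0.0 < efficiency <= 1.0:
        raise ValueError("efficiency must be in the range (0, 1]")
    return capacity_wh * efficiency / load_watts


print(runtime_hours(50, 50))  # 1.0 hour at a 50 W load
print(runtime_hours(50, 5))   # 10.0 hours at a 5 W load
```

Real batteries deliver less than this ideal figure because capacity depends on temperature, age, and discharge rate, so the result is best read as an upper bound.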

Conclusion

In conclusion, the watt is an important unit for measuring power, the rate at which energy is transferred in electrical, mechanical, and thermal systems. Though James Watt did not invent the unit named after him, his work on improving the steam engine made power a central engineering concept, which led to the unit being named in his honor.

Understanding watts gives us a common way to compare the power usage of devices like lightbulbs, motors, and batteries. It also allows us to calculate the cost of energy consumption by converting watt-hours to kilowatt-hours. The conversions between watts and other units of power and energy further enable analysis across different systems and energy domains.

By learning what a watt truly represents and how to apply it correctly, we can better grasp the power demands of our modern world and more efficiently utilize the energy sources that power our lives.