What Did People Do Before Solar Panels?

It’s hard to imagine life without solar panels today. But just 50 years ago, solar photovoltaic (PV) panels were an exotic technology found only in space programs and remote off-grid locations. For most of human history, people relied on less efficient energy sources that often came with unwanted side effects. The rise of solar panels offered one of the first clean, renewable sources of electricity that an average household could own.

Before the silicon solar cell was invented in 1954, no one could turn the sun’s nearly limitless energy into electricity on a rooftop. People who needed heat, light, or power had to burn wood, coal, oil, or gas. The fossil fuel age changed the world, but it also damaged the environment. As solar panel technology improved during the space age, people recognized its potential. With solar, humanity could maintain a modern standard of living sustainably and cleanly. The solar revolution has aimed to fulfill that promise ever since.

Pre-Industrial Energy Sources

Before the widespread use of fossil fuels and machines during the Industrial Revolution, people relied on more basic sources of energy for daily life, work, and manufacturing. These pre-industrial energy sources included human and animal power, firewood, and early wind and hydropower devices like windmills and waterwheels.

Human and animal muscle power provided the majority of mechanical energy in the pre-industrial era. People used their own physical labor for tasks like grinding grain, pumping water, sawing wood, and powering simple machines. Draft animals like horses, oxen, and donkeys were harnessed to plow fields, transport goods, operate mills, and perform other heavy work. It’s estimated that human and animal labor accounted for over 90% of the mechanical power used worldwide before the Industrial Revolution.

Burning wood was the main source of heat for warming homes, cooking food, and running early industries like brick-making, metallurgy, and glassblowing. Although firewood was simple to gather and use, heavy reliance on it led to widespread deforestation in parts of Europe.

Windmills and waterwheels provided some of the earliest forms of automation through mechanization. Windmills used the wind’s kinetic energy to automate tasks like pumping water or grinding grain. Waterwheels captured the energy of flowing or falling water to operate mills for processing timber, grain, or cloth. Though limited in scope, windmills and waterwheels were an important precursor to the industrial use of inanimate power sources.

Sources:

https://quizlet.com/150393391/20th-century-world-history-quiz-1-flash-cards/

https://www.slideserve.com/hoyt-vinson/resources

Fossil Fuels

Fossil fuels have been an integral part of human history for centuries. Coal was one of the earliest fossil fuels used by humans, with archaeological evidence showing it was used in China as far back as 1000 BCE for smelting and heating (The Complete History Of Fossil Fuels, oilprice.com). Coal use gradually increased and spread to other parts of the world. By the 1600s it was displacing wood as a primary fuel in some regions, notably England, helped by its higher energy density.

The Industrial Revolution of the 18th and 19th centuries led to an explosion in demand for fossil fuels like coal to power steam engines and machinery. By the late 19th century, petroleum had become the next major fossil fuel to be harnessed, once drilling techniques were developed to extract it from underground reservoirs. Petroleum could be refined into products like gasoline, diesel, and heating oil, making it indispensable for transportation and infrastructure.

Natural gas became another vital fossil fuel in the 20th century, used for heating, electricity generation, and as a chemical feedstock. The discovery of large natural gas fields and the advent of transport methods like pipelines and liquefied natural gas (LNG) tankers allowed natural gas to be moved long distances for consumption.

Overall, fossil fuels have been instrumental in the development of modern civilization, providing abundant energy for transportation, electricity, manufacturing and more. However, their use has also raised environmental concerns due to emissions and climate change.

Early Electricity

The early days of electricity generation focused on hydroelectric power and generators. One of the first hydroelectric power plants opened in Appleton, Wisconsin, in 1882, marking the beginning of harnessing falling water to generate electricity (solarpower.guide). Hydroelectric dams soon began providing electricity to cities and towns across the United States. Generators also played a key role, with Nikola Tesla designing early alternating current (AC) generators in the late 1880s (bestpracticeenergy.com). Because AC can be stepped up to high voltage with transformers, which reduces losses in the wires, these systems allowed electricity to be transmitted over longer distances.

By the early 1900s, interconnected electrical grids were being developed to supply electricity to broader regions. The first modern interconnected grid was built by Samuel Insull in Chicago in 1907, delivering AC electricity to homes and businesses from power stations (Encyclopedia.com). This early grid became a model for rapidly expanding access to electricity across the country.

Nuclear Energy

The history of nuclear power goes back to discoveries in atomic physics in the early 20th century. Scientists whose work drove early atomic research included Marie Curie, Albert Einstein, Enrico Fermi, and Leo Szilard [1]. During the World War II Manhattan Project, scientists focused on creating atomic weapons, but some recognized that the energy released by splitting uranium atoms, a process called nuclear fission, could be applied to peaceful purposes like generating electricity.

After the war, research began in the late 1940s on nuclear reactors that could harness atomic power for energy production. In 1951, Experimental Breeder Reactor I (EBR-I) in Idaho became the first nuclear reactor to produce electricity [2]. Through the 1950s, many countries invested in reactor research and built prototypes. The first commercial nuclear power plant opened in 1956 at Calder Hall in the UK.

Most nuclear power plants operate using nuclear fission, in which neutrons split the nuclei of uranium atoms in the fuel rods in a controlled chain reaction. The heat from the fission reaction boils water to produce steam, which spins a turbine to generate electricity. Nuclear reactors require extensive safety systems to contain radiation and prevent meltdowns. Major nuclear accidents like Chernobyl in 1986 and Fukushima in 2011 raised serious concerns about the risks and highlighted the need for strict operational controls and containment mechanisms.

Renewable Energy Experiments

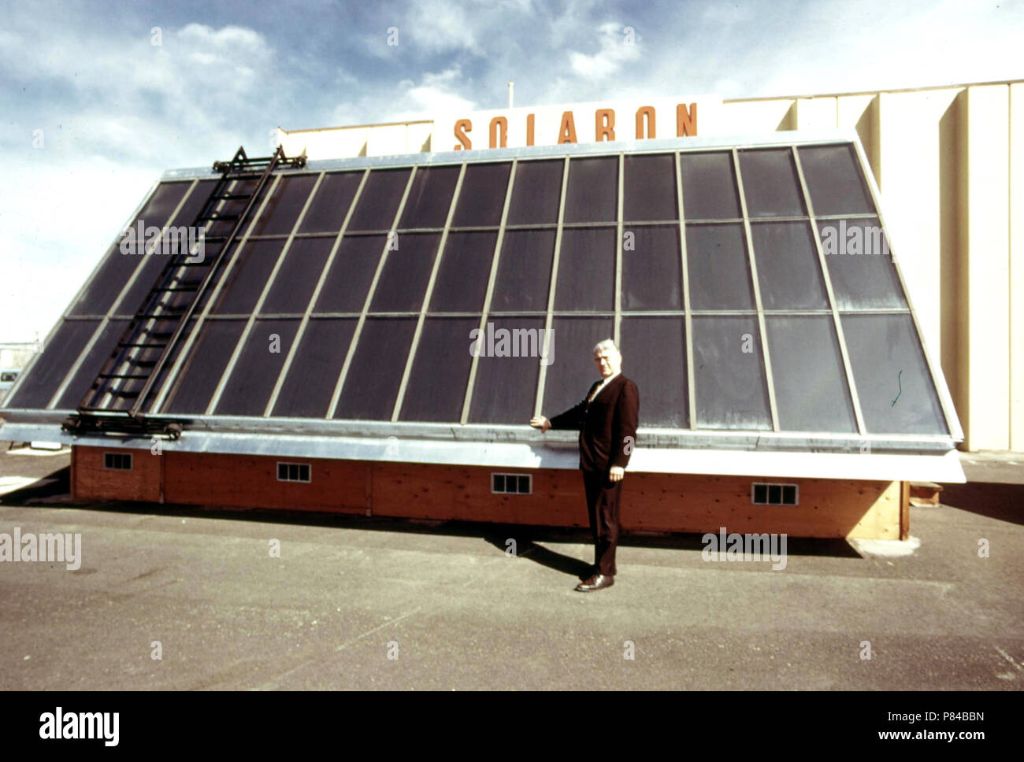

In the 1970s, rising oil prices and growing environmental concerns led some people to begin experimenting with renewable energy sources like solar and wind power. At places like the Arthur Morgan School in Celo, North Carolina and the Institute for Social Ecology’s Cate Farm in Vermont, pioneers attempted to build completely solar-powered homes and facilities, often assembling solar panels themselves.

These early solar panels were expensive and inefficient. But visionaries hoped that further research and development could improve solar technology and make it a viable mainstream energy source. According to Dan Chodorkoff, one of the founders of Cate Farm, their renewable energy experiments were intended to “see if we could build a facility that was self-sufficient and ecological.”

In addition to solar, Cate Farm also relied on a wind turbine, biodiesel generator, and geothermal heating to achieve energy self-sufficiency. But maintaining these systems was labor-intensive. Chodorkoff notes that the biodiesel generator alone required manually gathering used oil from over a hundred restaurants. And the technology still did not fully meet the community’s energy needs.

Nevertheless, these pioneers helped advance renewable technology. Their willingness to invest time and money into solar, wind, and other renewables laid the groundwork for the growth of green energy in subsequent decades.

Source: https://social-ecology.org/wp/2014/05/dan-chodorkoff-origins-ise/

Environmental Impact

Before the widespread use of solar panels, most energy came from fossil fuels like coal, oil, and natural gas. Burning fossil fuels released large amounts of greenhouse gases like carbon dioxide into the atmosphere. According to the Union of Concerned Scientists (UCSUSA), “In the United States, electric power plants are the largest single source of the carbon dioxide emissions that are driving global climate change.”

Other pollutants released from burning fossil fuels include sulfur dioxide, nitrogen oxides, particulate matter, mercury, and other heavy metals. These emissions contributed greatly to problems like acid rain, smog, respiratory illness, and ecosystem damage.

Extraction, processing, and transportation of fossil fuels also had major environmental impacts like habitat destruction, groundwater contamination, and oil spills. As the GreenMatch article states: “Mining coal and drilling for oil release methane into the atmosphere as these greenhouse gases are trapped in coal beds and surrounding rock.”

In addition to pollution, the unsustainable use of finite resources like coal, oil, and natural gas was environmentally problematic. Reliance on imports also had implications for geopolitics and energy security.

Development of Solar

The modern solar panel industry started developing in the 1950s, spurred on by the space program. Both the U.S. and Soviet space programs drove research into photovoltaic cells to power satellites and spacecraft (Etransaxle). Silicon solar cells were seen as the best option due to their power, durability, and light weight.

Through the 1960s, solar was still prohibitively expensive for widespread residential use. But research continued, both for space applications and for bringing costs down on Earth. The oil crisis of the 1970s increased interest in solar as an alternative energy source. Government tax incentives helped drive early adoption of solar panels.

Over time, improvements in manufacturing and solar cell technology dramatically reduced costs. New storage solutions like batteries allowed solar power to be used when the sun wasn’t shining. These developments helped make solar energy economically viable for mainstream use.

Solar Goes Mainstream

In the early 2000s, solar technology started to become more efficient and affordable for mainstream use. Some key factors driving this growth included:

Improving efficiency – Solar cell efficiency increased steadily, with modern monocrystalline panels reaching over 20% efficiency. This meant more energy could be produced from a panel of the same size.

Government incentives – Countries like Germany, Japan, and the USA introduced generous solar incentives like feed-in tariffs to encourage solar adoption. This helped spur massive growth in installations. [1]

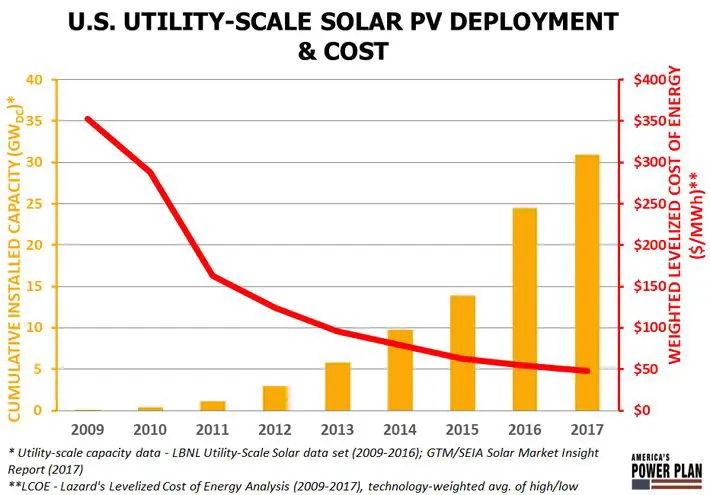

Falling prices – As production scaled up globally, solar panel prices fell dramatically. Between 2008 and 2013, average PV module prices fell by around 80%. This made solar electricity cost-competitive with fossil fuels in many regions.

With these improvements, solar was no longer a niche technology but a mainstream energy source. Cumulative installed capacity rose from under 5 gigawatts globally in 2005 to over 100 gigawatts by 2012. Households, businesses, and utilities all embraced solar as a practical energy solution.
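To put the figures above in yearly terms, here is a minimal Python sketch of the arithmetic. It treats the article's round numbers (an 80% price fall over 2008 to 2013, and growth from 5 to 100 gigawatts over 2005 to 2012) as exact, which they are not. The function names, the 1.7 square meter panel size, and the 15% comparison efficiency are illustrative assumptions, not values from the sources cited here. The 1,000 watts per square meter default is the sunlight intensity used in standard panel test conditions.

```python
def annual_rate(start: float, end: float, years: int) -> float:
    """Constant yearly rate of change that turns `start` into `end` over `years`."""
    return (end / start) ** (1 / years) - 1


def panel_watts(area_m2: float, efficiency: float,
                irradiance_w_m2: float = 1000.0) -> float:
    """Electrical output of a panel: area x sunlight intensity x efficiency."""
    return area_m2 * irradiance_w_m2 * efficiency


# Module prices: an 80% fall leaves 20% of the 2008 price by 2013 (5 years).
price_rate = annual_rate(1.0, 0.20, 2013 - 2008)

# Capacity: 5 GW (2005) to 100 GW (2012), taking the article's bounds as exact.
capacity_rate = annual_rate(5.0, 100.0, 2012 - 2005)

# Same hypothetical 1.7 m^2 panel at an assumed 15% versus 20% efficiency.
older = panel_watts(1.7, 0.15)
newer = panel_watts(1.7, 0.20)

print(f"Implied price change per year: {price_rate:.1%}")
print(f"Implied capacity growth per year: {capacity_rate:.1%}")
print(f"Panel output: {older:.0f} W at 15%, {newer:.0f} W at 20%")
```

Under these assumptions, prices fell by about 27% per year and capacity grew by roughly 53% per year. Because the article gives the capacity figures as "under 5" and "over 100" gigawatts, the true growth rate was likely somewhat higher.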

Conclusion

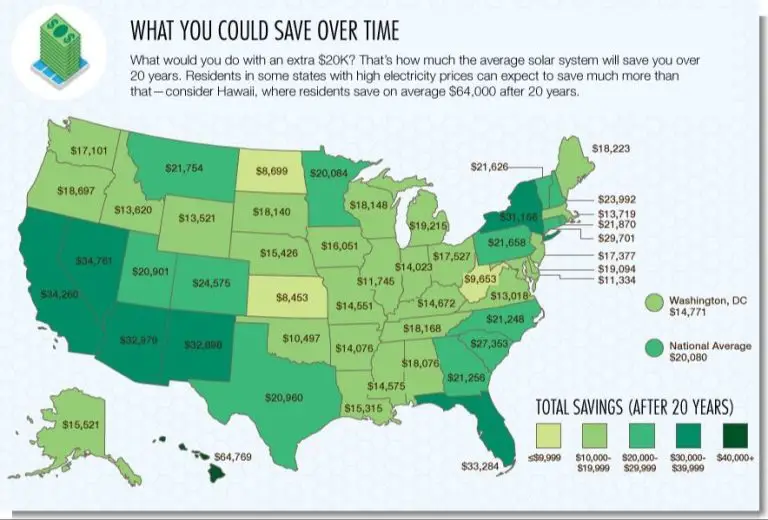

Solar energy has come a long way in a short time. Just a few decades ago, solar panels were rare and expensive. Today, they are affordable, accessible, and commonplace. Over 1 million homes in the U.S. are powered by solar energy. The future of solar is bright. With continued improvements in efficiency and further cost declines, solar energy has the potential to become one of our primary energy sources. Some experts predict that by 2050, solar could provide 20% of U.S. electricity needs. Continued technological advances could make solar panels available to even more people around the world, bringing clean, renewable power to new regions. Solar power could transform our energy landscape in many ways in the coming years.